North

America

North

Europe

Central

Europe

Eurasia

Northeast

Asia

Southeast

Asia

Premier News

Articles

Automated real-time statistics

Comprehensive statistics are a requirement of any successful sport. Billiards has had basic statistics in the past, however Premier’s new real-time automated statist...

READ ARTICLE

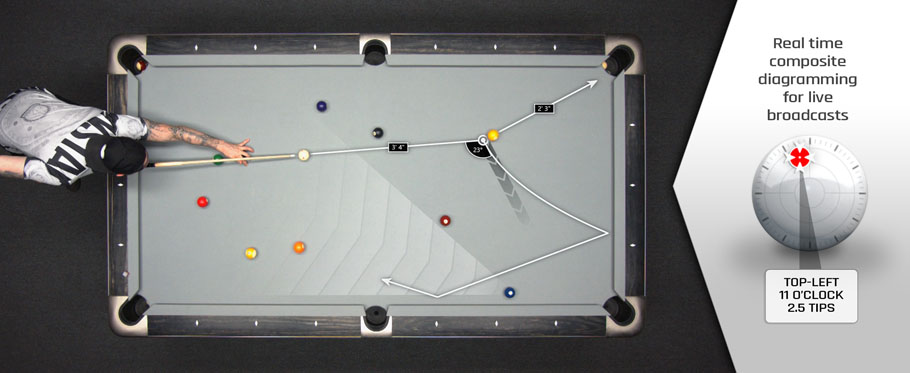

Real-time composite diagramming...

As media and entertainment consumption increases each year, it becomes increasingly difficult for content creators to remain competitive. While most sports have contin...

READ ARTICLE

Team Region Logos

For the inaugural year, the Premier Billiard League will consist of six teams, each representing a major market. The teams are North America, North Europe, Central Eur...

READ ARTICLE

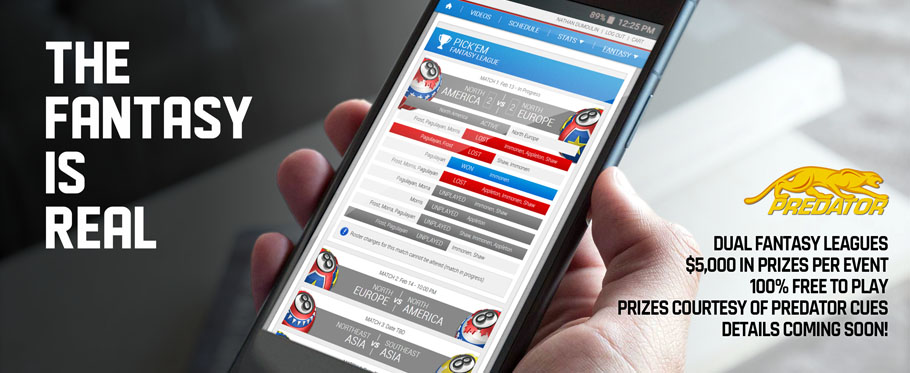

Welcome Sponsors

Here at the Premier Billiard League, we are working towards creating the best billiard broadcasts possible. To be the best, we need the best. From players, to technolo...

READ ARTICLE

Introducing the Premier Billiar...

Despite growing participation over the past two decades, cue sports have failed to adapt and modernize along with other sports, resulting in a significant decline in e...

READ ARTICLEMailing

List

Join our mailing list and get notifications of when we broadcast live, fantasy league deadlines, special video releases, etc. Our mailing list is spam free.